CedarDB: Catching Up on Recent Releases

This post takes a closer look at some of the most impactful features we have shipped in CedarDB's recent releases. Whether you have been following along closely or are just catching up, here are the additions we are most excited about.

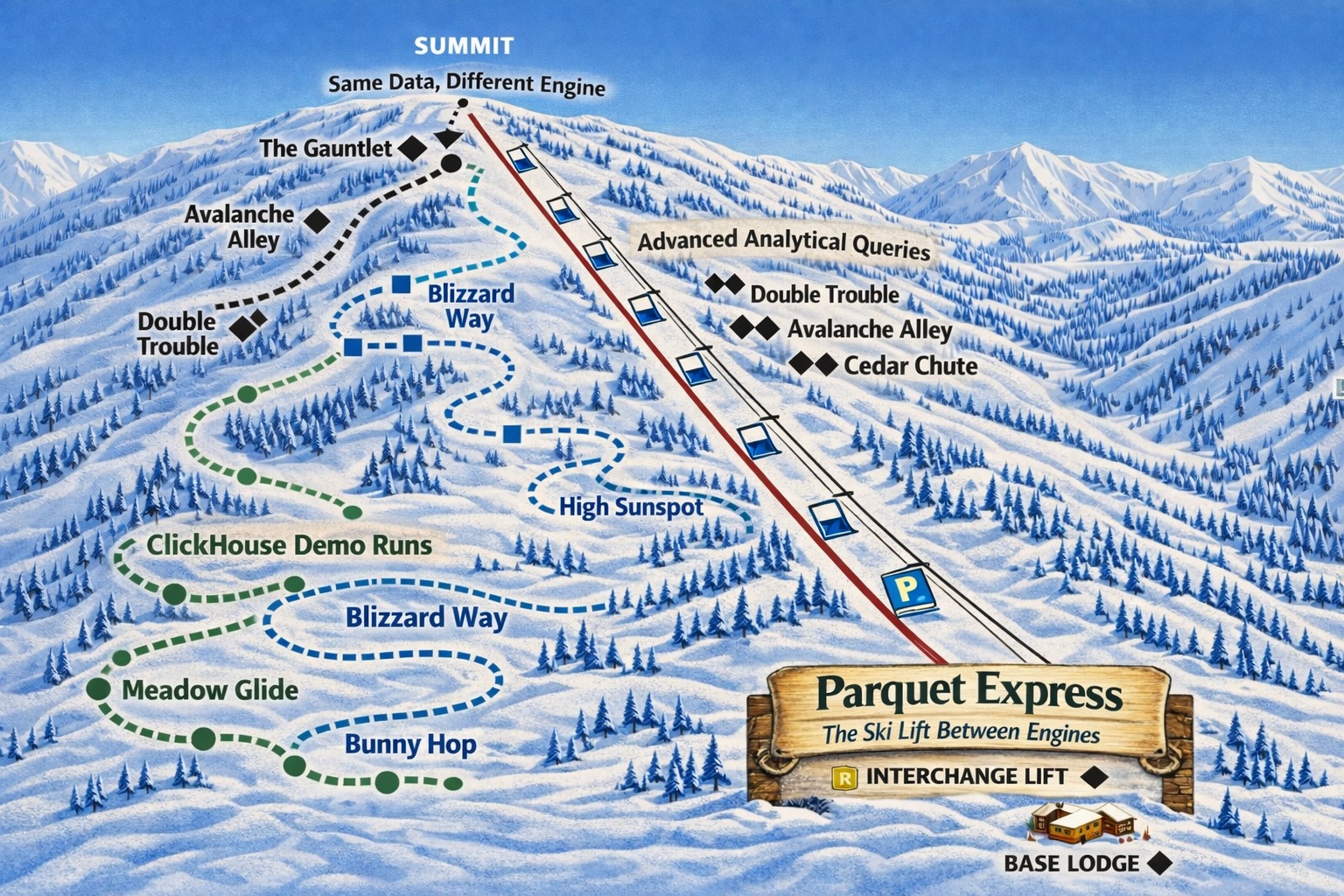

Parquet Support: Your On-Ramp to CedarDB

v2026-03-03

Thanks to the strong compression its columnar format enables, Parquet has become very popular over the past several years. Today it is used by projects like Spark, DuckDB, Apache Iceberg, ClickHouse, and others. As a result, Parquet is not only a format for running analytical queries but also a handy exchange format between OLAP engines. CedarDB can now query Parquet files directly and load them into its own native storage format with a single SQL statement:

postgres=# SELECT * FROM 'data.parquet' LIMIT 6;
 id |     name      |       email       | age |    city     | created_at
----+---------------+-------------------+-----+-------------+------------
  1 | Alice Johnson | alice@example.com |  29 | New York    | 2024-01-15
  2 | Bob Smith     | bob@example.com   |  34 | Los Angeles | 2024-02-20
  3 | Clara Davis   | clara@example.com |  27 | Chicago     | 2024-03-05
  4 | David Lee     | david@example.com |  41 | Houston     | 2024-04-18
  5 | Eva Martinez  | eva@example.com   |  23 | Phoenix     | 2024-05-30
  6 | Frank Wilson  | frank@example.com |  38 | San Antonio | 2024-06-12
(6 rows)

-- Load the Parquet file into a native CedarDB table:
CREATE TABLE my_table AS SELECT * FROM 'data.parquet';

This makes migrating from other OLAP systems, or ingesting data from your data lake, straightforward. Check out our Parquet Documentation and our hands-on comparison of CedarDB vs. ClickHouse using StackOverflow data, where CedarDB was 1.5-11x faster than ClickHouse after a Parquet-based migration.

Better Compression, Less Storage: Floats and Text

v2026-01-22 and v2025-12-10

Storage efficiency has seen a major leap on two fronts. For floating-point columns (i.e., of type REAL and DOUBLE PRECISION ), CedarDB now applies Adaptive Lossless Floating-Point compression (ALP), a state-of-the-art technique that halves the on-disk footprint (on average) - perfect for workloads heavy on sensor readings or metrics.

For TEXT columns, we’ve adopted FSST (Fast Static Symbol Table) compression combined with dictionary encoding. In practice, this can halve the storage size of text-heavy tables while also making queries faster, since less data needs to be read from disk. Read our deep-dive blog post on the FSST implementation here.
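The toy Python sketch below illustrates how the two ideas can be combined: dictionary encoding stores each distinct string once and replaces column values with small integer codes, and an FSST-style symbol table then replaces frequent substrings (up to 8 bytes) of the dictionary entries with single-byte codes, escaping any byte that no symbol covers. The symbol table is hand-picked and hypothetical (real FSST learns up to 255 symbols from a sample of the data), and the layout is an assumption for illustration, not a description of CedarDB's DictFSST format.

```python
ESCAPE = 255  # code 255 marks "the next byte is a literal"


def fsst_encode(text: bytes, symbols: list[bytes]) -> bytes:
    """Greedily replace the longest matching symbol with its 1-byte code."""
    out = bytearray()
    i = 0
    while i < len(text):
        best_code, best_len = -1, 0
        for code, sym in enumerate(symbols):
            if len(sym) > best_len and text.startswith(sym, i):
                best_code, best_len = code, len(sym)
        if best_code >= 0:
            out.append(best_code)
            i += best_len
        else:
            out += bytes([ESCAPE, text[i]])  # no symbol matches: escaped literal byte
            i += 1
    return bytes(out)


def fsst_decode(codes: bytes, symbols: list[bytes]) -> bytes:
    out = bytearray()
    i = 0
    while i < len(codes):
        if codes[i] == ESCAPE:
            out.append(codes[i + 1])
            i += 2
        else:
            out += symbols[codes[i]]
            i += 1
    return bytes(out)


def dict_fsst_encode(column: list[str], symbols: list[bytes]):
    """Dictionary-encode the column, then FSST-compress each distinct string once."""
    dictionary = sorted(set(column))
    code_of = {s: n for n, s in enumerate(dictionary)}
    compressed_dict = [fsst_encode(s.encode(), symbols) for s in dictionary]
    return compressed_dict, [code_of[s] for s in column]


def dict_fsst_decode(compressed_dict: list[bytes], codes: list[int],
                     symbols: list[bytes]) -> list[str]:
    strings = [fsst_decode(c, symbols).decode() for c in compressed_dict]
    return [strings[c] for c in codes]


symbols = [b"CedarDB ", b"Blog", b"Docs"]  # hypothetical, hand-picked symbol table
titles = ["CedarDB Blog", "CedarDB Docs", "CedarDB Blog", "CedarDB Blog"]
compressed_dict, codes = dict_fsst_encode(titles, symbols)
assert dict_fsst_decode(compressed_dict, codes, symbols) == titles
```

Here each 12-byte distinct title shrinks to 2 bytes and repeated titles cost only an integer code. Because every dictionary entry is compressed on its own, a single string can still be decoded without touching its neighbours, which is what makes FSST attractive for query processing.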

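To see the effect in practice, the query below uses cedardb_compression_info to report the compressed size of the title column of the hits table as a percentage of its uncompressed size.

Without DictFSST: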

postgres=# SELECT 100 * ROUND(SUM(compressedSize)::float / SUM(uncompressedSize), 4) || '%' as of_original FROM cedardb_compression_info WHERE tableName = 'hits' AND attributeName = 'title';
 of_original
-------------
 21.83%
(1 row)

With DictFSST:

postgres=# SELECT 100 * ROUND(SUM(compressedSize)::float / SUM(uncompressedSize), 4) || '%' as of_original FROM cedardb_compression_info WHERE tableName = 'hits' AND attributeName = 'title';
 of_original
-------------
 13.42%
(1 row)
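As a quick sanity check on what these two percentages mean, the plain Python arithmetic below (using only the numbers shown above) turns them into a relative saving and into compression ratios.

```python
before = 21.83  # compressed size of hits.title as % of uncompressed, without DictFSST
after = 13.42   # the same measurement with DictFSST

relative_saving = 1 - after / before  # fraction of the previous compressed size saved
ratio_before = 100 / before           # uncompressed size / compressed size
ratio_after = 100 / after

print(f"DictFSST needs {after / before:.1%} of the previous space "
      f"({relative_saving:.1%} smaller); "
      f"compression ratio {ratio_before:.1f}x -> {ratio_after:.1f}x")
```

For this column, DictFSST shrinks the already-compressed data by roughly 38.5%, taking the overall compression ratio from about 4.6x to about 7.5x.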

Improved Parallelism for DDL and Large Writes

v2026-02-03

Schema changes and parallel bulk loads no longer bring your database to a halt. CedarDB now runs DDL operations such as ALTER TABLE and CREATE INDEX on different tables in parallel. Similarly, large INSERT, UPDATE, and DELETE operations now also run in parallel. None of these operations affect concurrent readers anymore. For teams running mixed OLTP/OLAP workloads, this is a significant quality-of-life improvement.
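A minimal Python sketch of the general idea behind running DDL on different tables in parallel: instead of one global schema lock, each table gets its own lock, so an ALTER TABLE on one table only waits for other DDL on that same table. The TableLocks class and run_ddl helper are hypothetical and only illustrate lock granularity; this post does not describe CedarDB's actual concurrency control, including how readers stay unaffected.

```python
import threading


class TableLocks:
    """One exclusive DDL lock per table instead of a single global lock."""

    def __init__(self) -> None:
        self._guard = threading.Lock()  # protects the dictionary itself
        self._locks = {}                # table name -> threading.Lock

    def lock_for(self, table: str):
        with self._guard:
            return self._locks.setdefault(table, threading.Lock())


def run_ddl(locks: TableLocks, table: str, statement: str, log: list) -> None:
    # Only DDL on the *same* table serializes here; other tables proceed in parallel.
    with locks.lock_for(table):
        log.append(f"{table}: {statement}")


locks = TableLocks()
log = []
threads = [
    threading.Thread(target=run_ddl,
                     args=(locks, "orders", "ALTER TABLE orders ADD COLUMN note TEXT", log)),
    threading.Thread(target=run_ddl,
                     args=(locks, "customers", "CREATE INDEX ON customers (email)", log)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

The trade-off is bookkeeping: a per-table lock table has to be protected itself (the _guard lock above), but in exchange unrelated schema changes no longer queue behind each other.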

PostgreSQL Advisory Locks

v2025-11-06

CedarDB now fully supports PostgreSQL advisory locks, including the blocking variants that wait until a lock is released, with automatic deadlock detection. This closes an important compatibility gap for operational applications that rely on advisory locks for mutual exclusion, whether to implement custom functionality (think job schedulers or distributed task queues) or to guarantee correctness (think schema migration tools). Check out our advisory locks documentation for more info.

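SELECT pg_advisory_lock(123);    -- waits until no other session holds lock 123
-- ... do work ...
SELECT pg_advisory_unlock(123);  -- releases lock 123; returns true if this session held it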
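Deadlock detection for blocking lock calls like pg_advisory_lock is classically built on a wait-for graph: each waiting session points at the session holding the lock it wants, and a cycle means none of them can ever proceed. The Python sketch below shows that technique under the simplifying assumption that every lock has a single exclusive holder and each session waits for at most one lock; it is not CedarDB's code, and the function names are hypothetical.

```python
from typing import Optional


def wait_for_graph(holders: dict, waiting: dict) -> dict:
    """holders: lock key -> holding session; waiting: session -> lock key it waits for.
    Returns edges s -> t meaning session s waits for a lock held by session t."""
    return {
        session: holders[key]
        for session, key in waiting.items()
        if key in holders and holders[key] != session
    }


def find_deadlock(holders: dict, waiting: dict) -> Optional[list]:
    graph = wait_for_graph(holders, waiting)
    for start in graph:
        position = {}  # session -> index on the current path
        path = []
        node = start
        # Each session waits for at most one lock, so just follow the chain.
        while node in graph:
            if node in position:
                return path[position[node]:]  # the sessions forming the cycle
            position[node] = len(path)
            path.append(node)
            node = graph[node]
    return None


# Session A holds advisory lock 1 and calls pg_advisory_lock(2);
# session B holds lock 2 and calls pg_advisory_lock(1).
print(find_deadlock({1: "A", 2: "B"}, {"A": 2, "B": 1}))
```

Once a cycle is found, a database typically breaks it by cancelling one of the waiting lock calls with an error, so the other session can continue.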

Late Materialization

v2026-01-15

Internally, CedarDB now delays fetching column data until it’s actually needed, a technique called late materialization. When a query filters out most rows early, only the surviving rows ever have their full column data fetched from storage. The result is faster queries on wide tables, with less wasted I/O. This improvement is transparent: no changes to your queries or schema are required.

In the example below, late materialization cuts the data fetched by the table scan from 24 GB to just 75 MB, and the query time from about 10.7 seconds to 140 ms.

Before:

postgres=# explain analyze SELECT * FROM hits WHERE url LIKE '%google%' ORDER BY eventtime LIMIT 10;
plan
-------------------------------------------------------------------------------------------------------------------------------------------------------------------------
🖩 OUTPUT () +
▲ SORT (In-Memory) (Result Materialized: 626 KB, Result Utilization: 83 %, Peak Materialized: 635 KB, Peak Utilization: 83 %, Card: 10, Estimate: 10, Time: 0 ms (0 %))+
🗐 TABLESCAN on hits (num IOs: 29'046, Fetched: 24 GB, Card: 853, Estimate: 67'688, Time: 10632 ms (100 % ***))
(1 row)

Time: 10651.826 ms (00:10.652)

After:

postgres=# explain analyze SELECT * FROM hits WHERE url LIKE '%google%' ORDER BY eventtime LIMIT 10;
plan
------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
🖩 OUTPUT () +
l LATEMATERIALIZATION (Card: 10, Estimate: 10) +
├───▲ SORT (In-Memory) (Result Materialized: 29 KB, Result Utilization: 58 %, Peak Materialized: 34 KB, Peak Utilization: 60 %, Card: 10, Estimate: 10, Time: 10 ms (8 % *))+
│ 🗐 TABLESCAN on hits (num IOs: 20, Fetched: 75 MB, Card: 482, Estimate: 67'688, Time: 116 ms (92 % ***)) +
└───🗐 TABLESCAN on hits (Estimate: 10)
(1 row)

Time: 139.806 ms
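The shape of the plan above can be mimicked with a toy Python model (hypothetical names, not CedarDB internals): a column store that counts every cell it reads as a stand-in for I/O, and a "filter on url, sort by eventtime, keep the top k" query evaluated once with early and once with late materialization. As in the After plan, the late variant first reads only the columns needed to filter and sort, carries row ids through the sort and limit, and then fetches full rows for the few survivors.

```python
from typing import Callable


class ColumnStore:
    """Toy columnar table that counts every cell it reads, as a stand-in for I/O."""

    def __init__(self, columns: dict) -> None:
        self.columns = columns
        self.cells_read = 0

    @property
    def num_rows(self) -> int:
        return len(next(iter(self.columns.values())))

    def read(self, column: str, row: int):
        self.cells_read += 1
        return self.columns[column][row]


def top_k_early(store: ColumnStore, filter_col: str, pred: Callable,
                sort_col: str, k: int) -> list:
    """Early materialization: build full rows first, then filter, sort and limit."""
    rows = []
    for r in range(store.num_rows):
        row = {c: store.read(c, r) for c in store.columns}
        if pred(row[filter_col]):
            rows.append(row)
    rows.sort(key=lambda x: x[sort_col])
    return rows[:k]


def top_k_late(store: ColumnStore, filter_col: str, pred: Callable,
               sort_col: str, k: int) -> list:
    """Late materialization: carry only (sort key, row id) through filter/sort/limit,
    then fetch all columns for the k surviving rows."""
    candidates = []
    for r in range(store.num_rows):
        if pred(store.read(filter_col, r)):
            candidates.append((store.read(sort_col, r), r))
    candidates.sort()
    survivors = [r for _, r in candidates[:k]]
    return [{c: store.read(c, r) for c in store.columns} for r in survivors]


def make_hits(n: int = 100) -> ColumnStore:
    """Hypothetical miniature 'hits' table; every 10th URL mentions google."""
    return ColumnStore({
        "url": ["https://google.com" if i % 10 == 0 else "https://example.org"
                for i in range(n)],
        "eventtime": [1000 - i for i in range(n)],
        "title": [f"title {i}" for i in range(n)],
        "payload": ["x" * 50 for _ in range(n)],
    })


def is_google(url: str) -> bool:
    return "google" in url


early, late = make_hits(), make_hits()
assert (top_k_early(early, "url", is_google, "eventtime", 3)
        == top_k_late(late, "url", is_google, "eventtime", 3))
print(early.cells_read, late.cells_read)  # 400 vs 122 cells read
```

Both variants return the same rows, but the late one touches the wide columns only for the three winners. The wider the table and the more selective the filter, the larger the gap, which matches the 24 GB versus 75 MB difference in the plans above.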

New Types: Unsigned Integers, UUIDv7, and Enums

v2025-11-06, v2025-10-22, and v2026-01-22

Three type additions make CedarDB more expressive:

- Unsigned integers (UINT1 to UINT8): Useful for importing data from Parquet, which also has native unsigned types, and the right fit for any domain where negative values make no sense (counts, IDs, port numbers, flags). No more workarounds with wider signed types!

- UUIDv7: A newcomer from PostgreSQL 18. Unlike the random UUIDv4, UUIDv7 embeds a millisecond-precision timestamp in its leading bits, so newly generated values sort roughly in creation order. That makes UUIDv7 index-friendly and suitable as a primary key, without the index fragmentation problems of random UUIDs. Check out our UUID Documentation for more info.

- Enum types: Columns can now be declared with a fixed set of string values, saving storage and making constraints explicit at the type level. Check out our Enum Documentation for more info.
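The storage argument behind unsigned integers and enums is easiest to see at the byte level. The Python sketch below uses the standard struct module as a stand-in for fixed-width column slots: a value from an unsigned 32-bit Parquet column fits a 4-byte unsigned slot (like UINT4) but overflows a signed 4-byte one (like INTEGER), which is what used to force widening to BIGINT. It also stores enum values as small integer codes instead of their labels; the one-byte code width is an illustrative assumption, since this post does not say how CedarDB physically stores enums.

```python
import struct

# A value from an unsigned 32-bit Parquet column, e.g. a byte counter.
value = 3_000_000_000

as_uint4 = struct.pack("<I", value)    # unsigned 32-bit: fits in 4 bytes
try:
    struct.pack("<i", value)           # signed 32-bit (like INTEGER): out of range
    fits_int4 = True
except struct.error:
    fits_int4 = False
as_bigint = struct.pack("<q", value)   # the old workaround: a signed 64-bit slot

# Enum: store a small integer code per row instead of the label text.
ORDER_STATUS = ("pending", "processing", "shipped", "delivered", "cancelled")
CODE = {label: code for code, label in enumerate(ORDER_STATUS)}

statuses = ["pending", "processing", "shipped"]
as_codes = bytes(CODE[s] for s in statuses)       # 1 byte per row (assumed width)
as_text = sum(len(s.encode()) for s in statuses)  # raw label bytes, uncompressed

print(len(as_uint4), fits_int4, len(as_bigint), len(as_codes), as_text)
```

The unsigned slot halves the width compared with the BIGINT workaround, and the enum codes replace variable-length labels with a fixed, tiny footprint while the type itself rejects any label outside the declared set.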

CREATE TYPE order_status AS ENUM ('pending', 'processing', 'shipped', 'delivered', 'cancelled');

CREATE TABLE orders (
    id          UUID PRIMARY KEY DEFAULT uuidv7(),
    status      order_status NOT NULL DEFAULT 'pending',
    quantity    UINT4 NOT NULL,
    total_cents UINT4 NOT NULL
);

INSERT INTO orders (status, quantity, total_cents)
VALUES ('pending', 2, 1999), ('processing', 1, 4999), ('shipped', 5, 2495);

postgres=# select * from orders;
                  id                  |   status   | quantity | total_cents
--------------------------------------+------------+----------+-------------
 019d0701-cb64-7c96-b90c-ebd4a4410dee | pending    |        2 |        1999
 019d0701-cb65-7c49-8a1a-2e4ea96a85b1 | processing |        1 |        4999
 019d0701-cb65-74ce-a987-b72905eb7f7a | shipped    |        5 |        2495
(3 rows)
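Because a UUIDv7 carries its creation time in its leading 48 bits (a Unix timestamp in milliseconds, as specified in RFC 9562), the timestamp can be recovered on the client side. The Python sketch below uses only the standard uuid and datetime modules to decode the three IDs from the output above; the helper name is our own. Note that the second and third IDs share the same millisecond, and the third actually sorts before the second: in this sample, the ordering that makes UUIDv7 index-friendly holds at millisecond granularity, not between values created within the same millisecond.

```python
import uuid
from datetime import datetime, timezone


def uuidv7_timestamp_ms(value: str) -> int:
    """Return the Unix timestamp (ms) stored in the top 48 bits of a UUIDv7."""
    u = uuid.UUID(value)
    if u.version != 7:
        raise ValueError(f"not a UUIDv7: {value}")
    return u.int >> 80  # 128 bits in total; the first 48 are the timestamp


ids = [
    "019d0701-cb64-7c96-b90c-ebd4a4410dee",
    "019d0701-cb65-7c49-8a1a-2e4ea96a85b1",
    "019d0701-cb65-74ce-a987-b72905eb7f7a",
]
for value in ids:
    ms = uuidv7_timestamp_ms(value)
    print(value, ms, datetime.fromtimestamp(ms / 1000, tz=timezone.utc).isoformat())
```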

PostgreSQL Compatibility: Moving Fast

CedarDB’s PostgreSQL compatibility continues to expand rapidly. Recent months have brought new SQL grammar support, a growing roster of system functions and un-stubbed catalog tables, and bug-for-bug compatibility fixes that allow more existing PostgreSQL tooling to work out of the box.

If a tool or library failed to connect or misbehaved in a previous version, it’s worth trying again: the list of working clients and frameworks is growing steadily. If your favorite tool isn’t working yet, please let us know by creating an issue.

That’s it for now

Questions or feedback? Join us on Slack or reach out directly.

Do you want to try CedarDB straight away? Sign up for our free Enterprise Trial below. No credit card required.