Simple, Efficient, and Robust Hash Tables for Join Processing

Hash tables are probably the most versatile data structures for data processing. For that reason, CedarDB depends on hash table to perform some of the most crucial parts of its query execution engine. Most prominently, CedarDB implements relational joins as hash joins. This blog post assumes you know what a hash join is. If not, the Wikipedia article has a short introduction into the topic for you. During the development of Umbra and now CedarDB, we rewrote our join hash table implementation several times. To share our latest design, TUM and CedarDB published a peer-reviewed scientific paper, which Altan will present at DaMoN'24 in Santiago de Chile next week.

While the paper is an excellent read by itself, this blog post will offer a more approachable description to the fundamental ideas behind our join implementation. In the following, we’ll:

- explain the implementation details of the most efficient hash join, which powers CedarDB,

- show you how we optimized the hot path of our hash table to only 10 instructions,

- and how such a hash table scan can scale robustly for huge and skewed workloads.

Why Off-The-Shelf Hash Tables Don’t Cut It

Since hash tables are such a common data structure, there are already many high-quality hash table implementations. For general-purpose C++ implementations, abseil, ankerl, boost, and folly, are popular choices. Unfortunately, none of them are a good fit for processing joins in database systems, where we have a set of rather unique and demanding requirements:

- Parallel data processing. A join commonly processes millions of rows. To do this efficiently, it needs to fully use all hardware resources.

- Efficiently filtering non-matching rows. Queries typically look for specific data. Consequently, most of the input data of a join will be filtered out. Joins should thus be optimized for the case where no matching partner is found.

- Be robust against duplicate skew. Almost all joins are over foreign-key relations: For each key on one side of the join, there are potentially hundreds of matching keys on the other side, forcing us to use multimap semantics. General purpose multimaps, however, often struggle with skew, where some keys are referenced much more often than others.

This does not mean that they are bad, they just tend to optimize for a use case much more common outside of database systems: storing and retrieving data from a lookup structure.

Parallel Processing

For database systems, the overhead of parallelizing algorithms is almost always worth it, since queries commonly process millions of rows. When spreading the work over all cores by processing chunks of data in parallel, we can thus commonly get a 100× speedup. Regular hash tables, however, do not support parallel inserts and would need expensive global locks. While parallel hash tables do support parallel inserts, they are usually slower for lookups, since they need to handle the general case which concurrently resizes the hash table. For a hash join, we can efficiently separate the workload into two phases:

- In the build phase, we only insert the tuples of the smaller side of the join.

- In the probe phase, we only check for each tuple of the larger side if we find a match.

Since we don’t mix inserts and lookups, we can make the read-only probe much more efficient. In practice, we split the join into three phases, with synchronization points between them:

- Each execution thread buffers its rows for the hash table’s build side locally.

- All threads insert their buffers into the hash table in parallel (tricky).

- All threads probe the hash table with the other side of the join (trivially parallel).

Phase 1 is required to know how large the hash table needs to be.

With the exact number of elements known, we can directly allocate a perfect-fit table and ensure it never needs to be

resized.

Phase 2 is trickier, since we want to write to the hash table in parallel, which needs to be synchronized.

For most hash tables, this would require locking, which would become a bottleneck.

Our design uses a chaining hash table, where parallel insertion is essentially a parallel insert into a linked list.

The synchronization of these inserts can be implemented efficiently

lock-free with an atomic_compare_exchange loop.

This is a common technique, and other systems like DuckDB use

the same strategy for parallel

inserts:

// Insert newEntry into the chaining hash table slot

Entry* current = slot.load();

do {

newEntry->next = current->next;

} while (!slot.compare_exchange_weak(current, newEntry));

Alternatively, one can also partition the tuples in Phase 1. Then, threads can independently perform inserts which only touch their part of the table. Unfortunately, this does not work for linear probing schemes, which are popular for general-purpose hash tables: they resolve collisions by probing neighboring slots, which could cross a partition boundary. To efficiently build a table within the partitions, each slot needs to correspond to exactly one partition. Again, chaining solves this problem, but we can avoid the atomic instructions and the potential contention and simply do linked-list insertions. In practice, both approaches work well. While partitioning can have slightly better cache locality, the most important part is executing this phase in parallel.

Finally, we probe the hash table in phase 3. This phase is read-only, and we do not need any synchronization. Optimizing this step, however, is important as the probe side is usually much larger, and execution spends most of the time here.

Efficient Filtering of Non-matching Rows

The probe phase of join processing is the hot path of join processing. While databases store huge amounts of data, not everything is interesting for a query. Usually, only a part of your data is interesting, e.g., the cute catgirls, and not the TPS reports. Probing the hash table, thus, will only find a join partner every couple of dozen lookups. Most general-purpose hash tables optimize for the “happy” case, assuming it usually contains an entry for the key you are looking for. In contrast, our hot path is the non-matching case. And for that path, we need to make every instruction count. The following technique can efficiently filter non-matching keys in just 10 x86 instructions:

To efficiently discard non-matching values, we use the hash of these values to:

- find the corresponding slot in the table,

- and a Bloom filter containment check1.

We hash keys to 64 bit, but only use a fraction to find the slot in the hash table. Therefore, we can re-use unrelated bits for an additional Bloom filter check without needing to compute another hash. In our hash table layout, we use the upper bits to find the slot in a pointer directory, and the lower bits to check Bloom filter tags, which we embed in the pointers. Since the upper 16-bits of 64-bit pointers are unused, we can squeeze 16-bit tags into the pointers with no space overhead. This fused layout has the additional advantage to double as a nullptr check.

u64 shift; // Used to reduce a hash to a directory slot

u64 directory[1 << (64 - shift)];

void lookup(K key, u64 hash) {

u64 slot = hash >> shift; // shr

u64 entry = directory[slot]; // mov

if (!couldContain((u16)entry, hash)) return; // jnz

// probably found a match, check the key

}

u16 tags[1 << 11] = {15, 23, 27, 29, 30, 39, ...};// Precalculated 4 bit Bloom filter tags

bool couldContain(u16 entry, u64 hash) {

u16 slot = ((u32) hash) >> (32 - 11); // shr

u16 tag = tags[slot]; // mov

return !(tag & ~entry); // andn

}

We choose the size of the directory as the next power of two larger than our dataset, which allows efficiently calculating the slot with a single bit shift. The entry of this slot then contains the Bloom filter in the lower 16-bits, which simplifies the containment check, as truncating to those bits is a noop on x86. As an additional optimization, we do not construct the tag of the hash on the fly, but use a constant 4kB lookup table with pre-calculated Bloom tags, which have four bits set. We can then test if all the Bloom tag bits match with an and-not instruction. Without the lookup table, calculating a 4-bit tag takes over a dozen instructions. In our implementation, the Bloom filter check only needs three instructions, and also implicitly checks for a null value. With this 4-bit Bloom filter, we get a false-positive rate of <1%, which significantly speeds up our fast path.

Hashing

Hashing values is surprisingly expensive. Since we use many of the hash bits, we need a well-distributed hash over all bits. For hash functions, FNV-1a is fast but produced too many collisions in our tests. The xxh3 avalanche has good quality but needs more instructions by itself than all other code of our lookup combined. We instead use a custom hash function based on CRC, as it can achieve a good collision resistance with very few instructions. Most modern CPUs have dedicated instructions for calculating CRCs, which results in incredibly compact code to calculate a hash.

u64 hash32(u32 key, u32 seed) {

u64 k = 0 x8648DBDB; // Mixing constant

u32 crc = crc32(seed, key); // crc32

return crc * ((k << 32) + 1); // imul

}

Of course, we need a CRC instruction for every 32 bits of the input, but the result is still a very compact instruction sequence. Having compact code for hashing is especially important to keep our hot loop tight:

When we have a small hash table, the whole hash table fits into the CPU cache. Therefore, we are CPU bound, and reducing the number of instructions directly reduces execution time. For a large hash table, the slowest part of the hash table lookup is the random memory access to the slot. One would expect that the number of executed instructions would not matter as much in this case as we are dominated by random memory access. However, our compact hot loop enables the out-of-order execution of modern CPUs to go crazy: The reorder buffer of a modern CPU microarchitecture fits several hundred instructions, which means that we automatically get dozens of concurrent lookups, which hides the memory access latency.

Handling Skew

In a join, each tuple can have multiple matches, forcing us to use an unordered_multimap, instead of just

an unordered_map.

For multimaps, naive linear probing is very vulnerable to skew, i.e., when many values have the same key.

These duplicates then fill up a whole region of the hash table, and any lookup to any of the collided slots would have

false-positive hits.

A hash table using chained entries does not have this problem, since all duplicate values will be put into the same slot, which compartmentalizes the collision by just extending the slot’s chain instead of making the whole table unusable. However, we now have a long linked list, which we need to traverse to find the real matches. This is particularly relevant for graph queries, e.g., finding friends of friends, where joins involving hub nodes will trigger repeated walks of these long linked lists.

To solve this issue, we can combine the pointer array of a chaining hash table, which gives us collision resistance and a low false positive rate with Bloom filters, and the dense storage layout of a linear probing scheme. In the paper, we call this layout unchained, which gives us the best of both worlds.

Results

Since a database system executes many different user-specified queries, a hash join implementation shouldn’t be optimized for one specific case, but improve the average runtime over all of our users’ workloads. To evaluate if our approach works well, we compare a hash table built upon these ideas against a hash table using simple chaining, as well as a Robin Hood hashing scheme. To capture a wide range of use-cases, we ran over 10000 different queries from five different benchmarks, which we ran on a standard workstation with an AMD Ryzen 5950X CPU and 64GB RAM.

We start with a comparison between chained and unchained, since we developed the unchained layout specifically for graph workloads where we observed expensive long linked-list traversals. Indeed, when we look at the several thousand graph queries in the scatter plot below, we see that we can speed up the long-running graph queries by up to 16×.

Zooming back out, a hash table optimized for graph queries is only useful as central hash table of a database system if it also performs well on non-graph queries. After all, there are some ideas like worst-case optimal joins, which can potentially speed up graph queries, but they have virtually no adoption, since they make most other joins significantly slower. When we look at TPC-H as a relational workload, we see that our unchained hash table can improve slightly on a chained hash table and is significantly faster than an open addressing scheme.

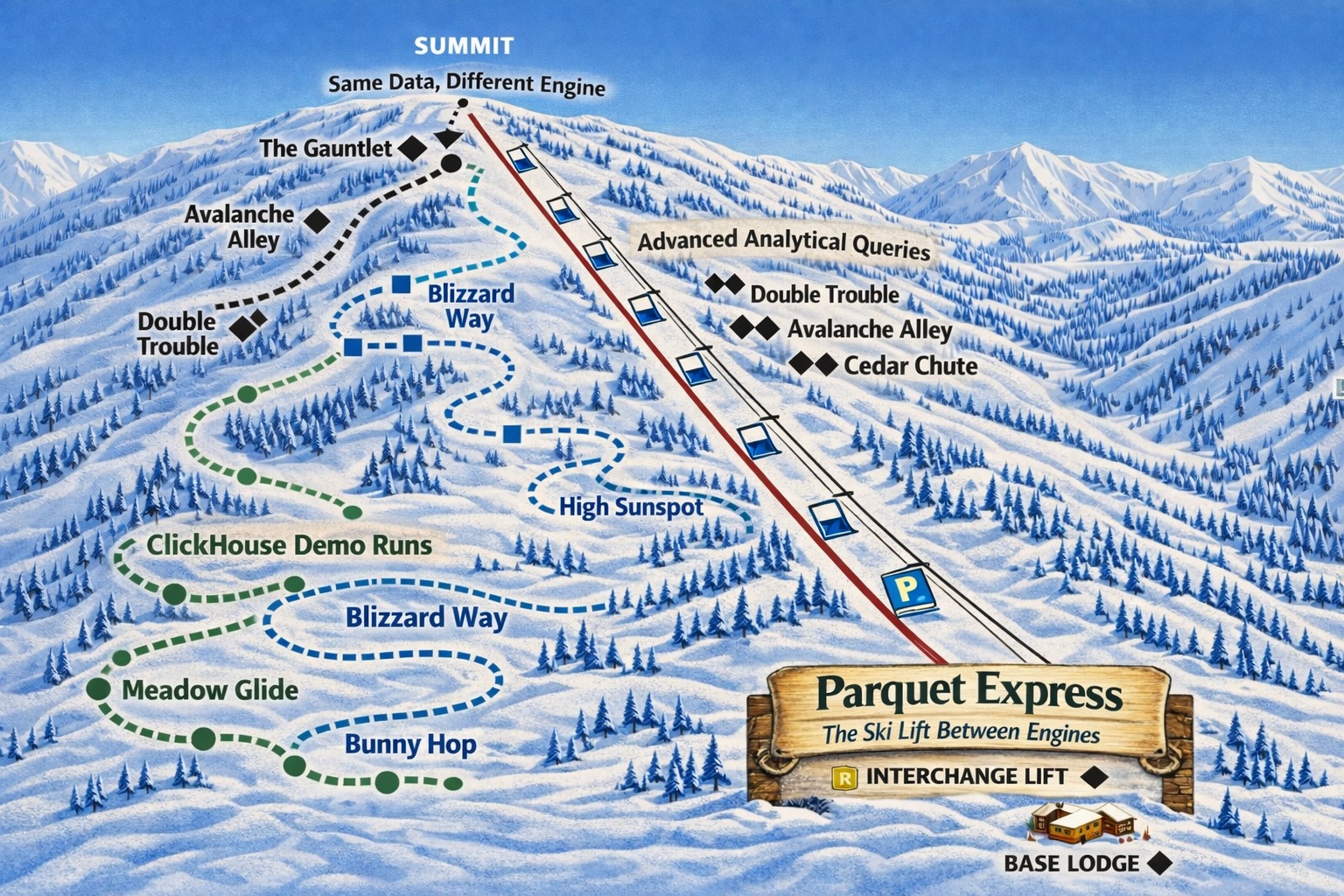

To give a perspective on how the overall performance of Umbra and CedarDB fares, we also include a comparison to Hyper and DuckDB. On this workload with around 100GB of data, our system is on average around 6× faster. Some other systems need significantly rewritten queries, and despite claims otherwise, ClickHouse performs poorly for such a workload with more than one large table. At CedarDB, we strive to build a system that covers all use-cases.

Goetz Graefe pointed out that SQL Server similarly used some bits of the hash table as filters as early as 1998. Apparently this was very effective to avoid disk I/O, like it is very effective now to reduce in-memory work in CedarDB. ↩︎